Did Milla Jovovich Use AI to Build a “Perfect-Score Project”? Developers Put It to the Test: Solid Work or Exaggerated Hype?

MemPalace, an AI memory system that Milla Jovovich helped develop, went viral after claiming a perfect score of 100 in testing, only to be called out by the community for allegedly gaming the benchmarks and presenting misleading data. Independent testing found that the results were exaggerated and that the code contained many bugs. The team has admitted the flaws and is working on fixes.

Milla Jovovich builds an AI memory palace and draws wide attention

Yesterday (4/7), there was a big piece of news in the AI community: Hollywood star Milla Jovovich, famous for Resident Evil and The Fifth Element, and developer Ben Sigman built the open-source AI memory system “MemPalace” with the help of Claude Code.

For a while, the claim that “Hollywood megastar crosses over and delivers a perfect-score project” spread widely. To date, MemPalace has also received more than 20k stars on GitHub, but it didn’t take long for the developer community to start questioning: Is there real substance—or is this just hype?

First, let’s talk about the motivation behind MemPalace’s creation. Official documentation says it aims to address a limitation in current AI systems: the content of users’ conversations with AI, the decision-making process, and architectural discussions typically disappear after a work session ends, causing months of effort to be wiped out.

To solve this problem, MemPalace stores memories in a spatial hierarchy. Information is sorted into wings that represent people or projects, and then into lower-level structures such as hallways, rooms, and drawers, while the original conversation text is preserved for later semantic retrieval.
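The hierarchy described above can be pictured as nested containers. The following is a minimal sketch only, not MemPalace's actual code: the class names, the `file` method, and the sample text are all hypothetical, and the hallway level is omitted to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass
class Drawer:
    """Holds one verbatim chunk of conversation text (hypothetical)."""
    text: str


@dataclass
class Room:
    """A topic inside a wing; owns a list of drawers (hypothetical)."""
    name: str
    drawers: list[Drawer] = field(default_factory=list)


@dataclass
class Wing:
    """A person or project; maps room names to rooms (hypothetical)."""
    name: str
    rooms: dict[str, Room] = field(default_factory=dict)

    def file(self, room_name: str, text: str) -> None:
        # Create the room on first use, then store the raw text in a new drawer.
        room = self.rooms.setdefault(room_name, Room(room_name))
        room.drawers.append(Drawer(text))


wing = Wing("project-alpha")
wing.file("architecture", "We agreed to keep the API stateless.")
wing.file("architecture", "Caching moves to the edge layer.")
print(len(wing.rooms["architecture"].drawers))  # 2
```

In a layout like this, the wing and room act as filters applied before any semantic search runs over the drawer text.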

The development team claims that MemPalace achieved a perfect score of 100% on the long-term memory benchmark LongMemEval, and reached 96.6% accuracy without calling any external API. The system is said to run entirely locally, with no cloud subscription required, and to include a so-called AAAK dialect system that achieves 30x lossless compression.

Image source: GitHub. American film star Milla Jovovich builds an AI memory palace, drawing wide attention

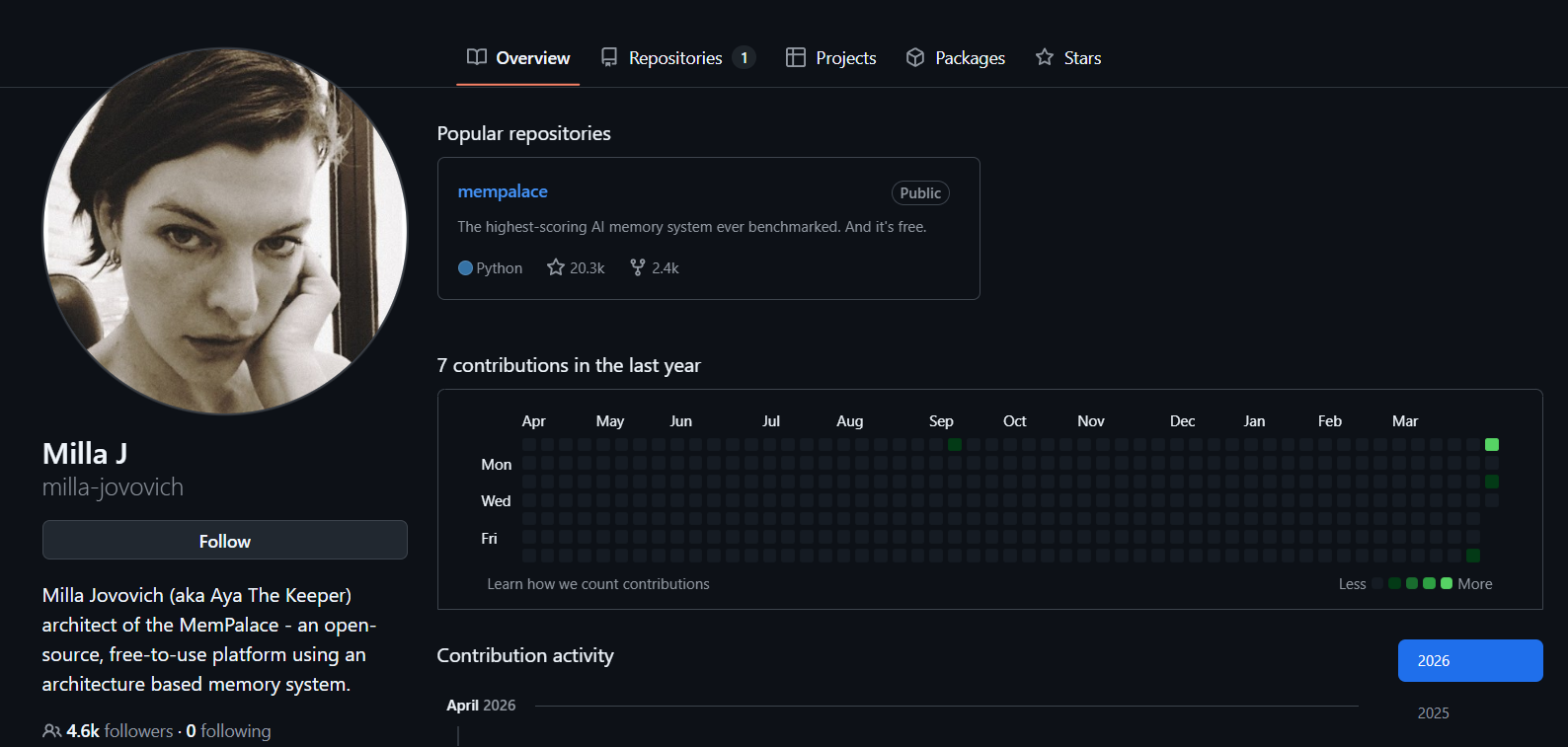

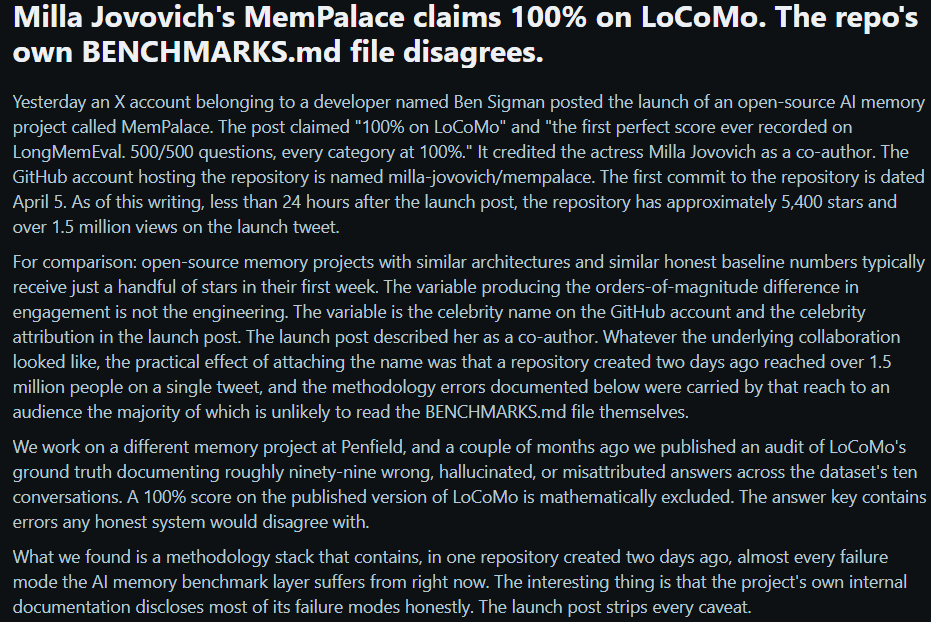

Peers and the community question the testing methods and the promotional claims

However, MemPalace’s claimed perfect score on LongMemEval quickly drew skepticism from peers.

PenfieldLabs, which also develops AI memory systems, pointed out that MemPalace’s claim of a perfect score on the LoCoMo dataset is mathematically impossible, because the dataset’s own answer key contains 99 errors.

PenfieldLabs’ analysis found that MemPalace’s 100% score came from setting the retrieval count to 50, while the longest conversation in the test dataset has only 32 sessions. Because the retrieval count exceeds the number of sessions available, the system effectively bypassed the retrieval stage and handed all of the data directly to the AI model to read.

As for the 100% score on LongMemEval, the development team was found to have targeted three specific questions that the system kept getting wrong during development and to have written dedicated fix code for them, raising suspicion that the fixes were tailored to the test set.
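The effect of an oversized retrieval count can be reproduced with a toy ranking. This is a sketch under stated assumptions, not MemPalace's or PenfieldLabs' code: the `top_k` helper, the session names, and the similarity scores are made up, and the relevant session is deliberately given the worst score.

```python
def top_k(scores: dict[str, float], k: int) -> list[str]:
    """Return the k highest-scoring session ids (hypothetical helper)."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k]


# A hypothetical conversation with 32 sessions; the scores are invented,
# and session_31 (the one holding the answer) is ranked last.
scores = {f"session_{i}": 1.0 / (i + 1) for i in range(32)}
relevant = "session_31"

print(relevant in top_k(scores, 5))   # False: a real retrieval test fails here
print(relevant in top_k(scores, 50))  # True: k exceeds the 32 sessions available
print(len(top_k(scores, 50)))         # 32: every session is returned
```

With a retrieval count of 50 and at most 32 sessions, even the worst possible ranking still "retrieves" the right session, so the score reflects the downstream model's reading ability rather than retrieval quality.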

Image source: Reddit. Peers such as PenfieldLabs point out that MemPalace’s claim of a perfect score on the LoCoMo dataset is mathematically impossible

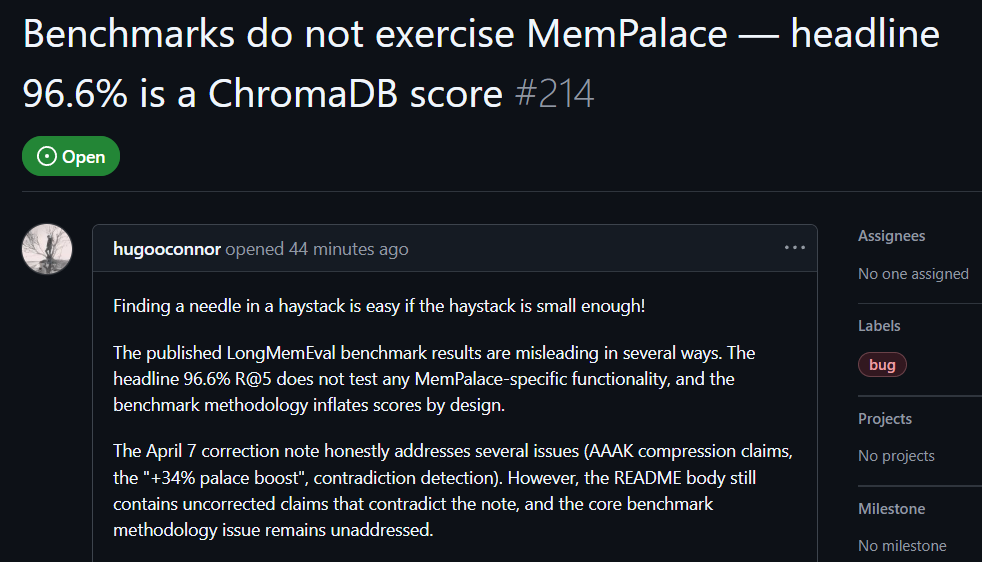

GitHub users’ real-world testing shows the benchmark test has misleading elements

After running his own tests, GitHub user hugooconnor commented that although MemPalace claims a retrieval accuracy as high as 96.6%, the benchmark run that produced this figure did not use the “memory palace” architecture at all. According to hugooconnor, the test simply calls the default functionality of the underlying database, ChromaDB, and never touches the categorization logic the project emphasizes, such as wings, rooms, or drawers.

hugooconnor also found that when the memory palace’s dedicated classification logic is actually enabled, retrieval performance declines. In room mode the accuracy drops to 89.4%, and with AAAK compression enabled it falls further to 84.2%. Both figures are lower than the 96.6% of the default database.

hugooconnor also criticized the testing approach. In MemPalace’s test environment, the retrieval range for each question is deliberately narrowed to about 50 dialogue sessions. Finding the answer in such a small pool is too easy.

If the range is expanded to the more than 19,000 dialogue sessions of a realistic scenario, the accuracy of traditional keyword search would plummet to 30%, which indicates that MemPalace’s current testing method masks the real difficulty of the search problem.
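A rough calculation shows how much the pool size matters. The sketch below assumes exactly one relevant session per question and a retrieval count of 5; both are illustrative assumptions, not figures from the benchmark, and `chance_hit_rate` is a hypothetical helper. It computes how often a retriever that picks sessions purely at random would still include the right one.

```python
def chance_hit_rate(k: int, pool_size: int) -> float:
    """Probability that k random picks (without replacement) include
    the single relevant session in a pool of pool_size sessions."""
    return min(k, pool_size) / pool_size


K = 5  # assumed retrieval count, for illustration only

small = chance_hit_rate(K, 50)      # pool of about 50 sessions
large = chance_hit_rate(K, 19_000)  # pool of 19,000 sessions

print(f"{small:.2%}")   # 10.00%
print(f"{large:.4%}")   # 0.0263%
```

Under these assumptions, blind guessing succeeds one time in ten on the small pool but almost never on the large one, which is why a score measured on the small pool says little about real-world retrieval.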

Image source: GitHub. GitHub users’ real-world testing shows the MemPalace benchmark test has misleading elements

The development team has since released a correction statement admitting that AAAK was verified to be lossy compression, and has promised to revise the documentation and system design in response to the community’s harsh criticism. Even so, the project’s main description document still contains multiple exaggerated claims that have not been corrected, including 30x lossless compression and a 34% retrieval improvement, and its comparison charts against competitors cite no sources at all.

MemPalace’s source code turns out to have multiple bugs

As more and more developers download and test the project, a large number of bug reports about MemPalace’s source code have appeared on GitHub.

User cktang88 listed multiple serious flaws: compression commands that fail to run and crash the system, errors in the summary word-count logic, inaccurate statistics for excavating rooms, and a server that loads all interpretation data into memory on every call, which consumes resources heavily.

Other issues that have been pointed out include the system hard-coding the developers’ family member names into the default configuration file, as well as a forced display limit of 10k records when checking query status.

In response to these issues, the open-source community has already begun actively fixing them. User adv3nt3 submitted multiple fix requests, including correcting the excavation statistics, removing the default family member names, and deferring the initialization of the knowledge graph. The development team later acknowledged these errors as well and is gradually resolving the code issues in collaboration with the community.

Milla Jovovich’s vibe coding is cool, but the marketing isn’t

Hacker News user darkhanakh summed up the MemPalace project this way: it gives the impression of OpenClaw, that is, of artificially manipulating benchmark results so they look flawless and then marketing the result as some kind of major breakthrough.

He believes that although MemPalace’s underlying technology might genuinely be interesting, it is simply not appropriate to promote it with “the highest public score in history” when the testing methods have flaws like these. “But Milla Jovovich doing vibe coding? I think that part is still pretty cool.”
